SAN FRANCISCO – Large language models (LLMs) are a type of AI algorithm that uses deep learning (DL) techniques and large datasets, usually petabytes in size, to understand, summarize, generate, and predict new content. LLMs are becoming increasingly popular thanks to their wide range of uses, including conversational chatbots, translation, text generation, and content summarization.1

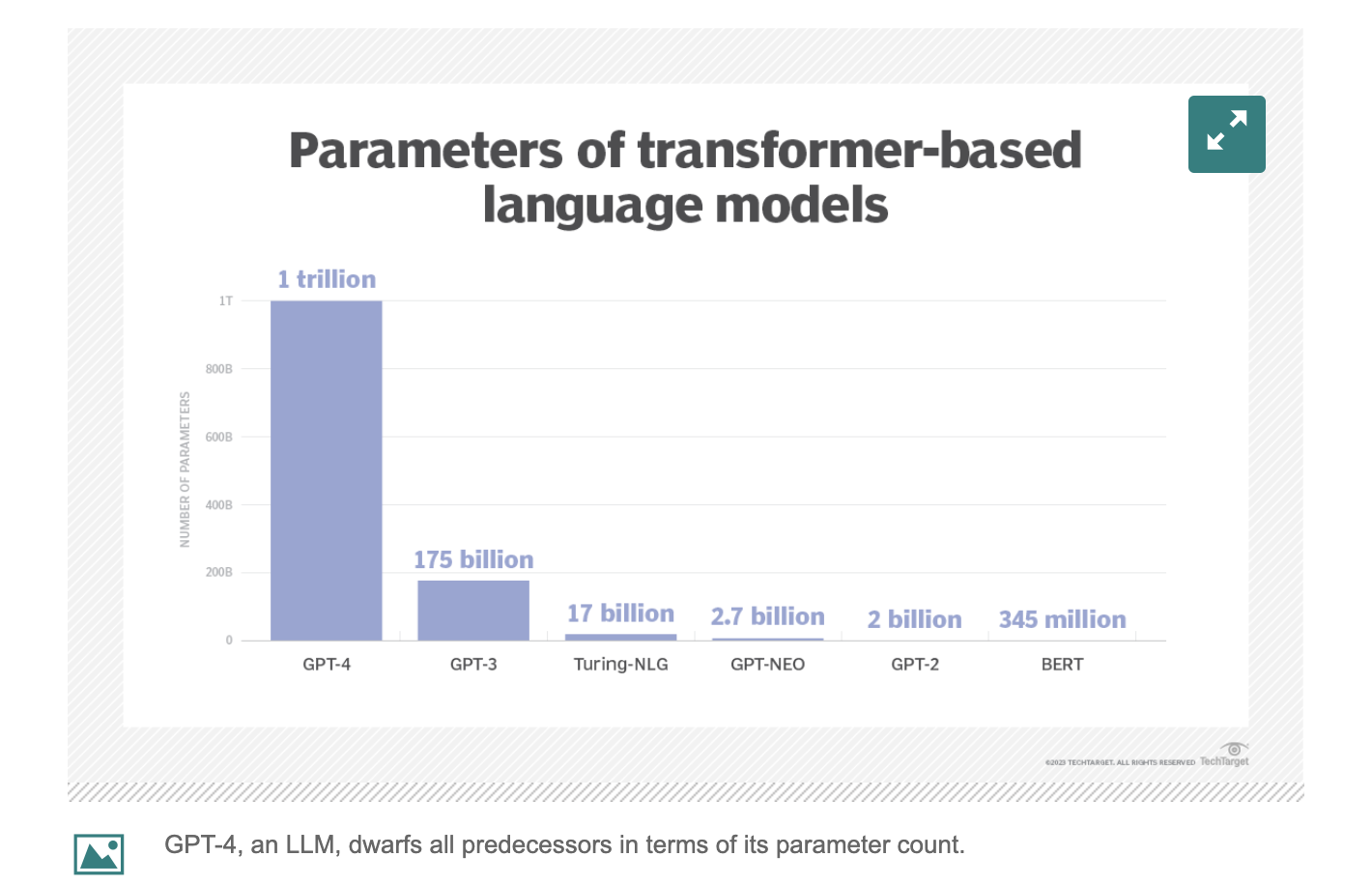

As the diagram above shows, LLMs have significantly more parameters than conventional language models, which increases both the memory an LLM requires and, generally, its performance.
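To make the link between parameter count and memory concrete, the minimal sketch below estimates the memory needed just to store a model's weights as parameters × bytes per parameter. The 7-billion-parameter model and the bytes-per-precision table are illustrative assumptions, not figures from the diagram, and real deployments also need memory for activations and caches.

```python
# Bytes needed to store one parameter at a few common numeric precisions.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_memory_gb(num_params: int, dtype: str = "float16") -> float:
    """Approximate memory in GB (1 GB = 1e9 bytes) to hold the weights alone."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Hypothetical 7-billion-parameter model at three precisions.
for dtype in ("float32", "float16", "int8"):
    print(dtype, weight_memory_gb(7_000_000_000, dtype))
```

For this hypothetical model the estimate is 28 GB at float32, 14 GB at float16, and 7 GB at int8, which is why parameter count translates so directly into hardware requirements.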

Self-instruction bolsters LLMs

Much recent research focuses on building models that follow natural language instructions, e.g., figuring out the zip code for an address. These developments are powered by two key components: large pre-trained language models and human-written instructions. Collecting such instruction data is costly, and the resulting datasets often lack diversity, especially given the growing demand for this developing technology. Self-instruction was devised to address this issue by automating the creation of instruction data.2

Workings of self-instruction

Self-instruction typically begins with a small set of manually written seed instructions, e.g., the 175 used in the SELF-INSTRUCT study. The model is prompted with instructions from this task pool (initially just the seeds) to generate instructions for new tasks, and it also creates candidate inputs and outputs for each newly generated task. Heuristics then automatically filter out low-quality or repetitive instructions and add the valid ones to the task pool. The process is iterated until the pool contains the desired number of tasks. Because each round expands the pool the model draws on, successive rounds let it cover a broader range of topics.
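The generate-filter-iterate loop can be sketched in Python as follows. This is a minimal sketch under stated assumptions: `toy_generate` is a hypothetical placeholder for the LLM call (the real method also generates inputs and outputs for each task, which this sketch omits), and the near-duplicate filter uses a simplified ROUGE-L overlap score with a 0.7 cutoff. That cutoff mirrors the similarity filter we understand the SELF-INSTRUCT authors to have used, but it should be treated as a tunable assumption.

```python
from typing import Callable, List


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence of two token lists (dynamic programming)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(a: str, b: str) -> float:
    """Simplified ROUGE-L F1 on lowercase whitespace tokens."""
    ta, tb = a.lower().split(), b.lower().split()
    lcs = lcs_length(ta, tb)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(ta), lcs / len(tb)
    return 2 * precision * recall / (precision + recall)


def self_instruct(
    seed: List[str],
    generate: Callable[[List[str]], List[str]],
    target_size: int,
    threshold: float = 0.7,
    max_rounds: int = 50,
) -> List[str]:
    """Grow a task pool from seed instructions, filtering near-duplicates each round."""
    pool = list(seed)
    for _ in range(max_rounds):
        if len(pool) >= target_size:
            break
        for candidate in generate(pool):
            # Heuristic filters: skip empty text and anything too similar to an existing task.
            if not candidate.strip():
                continue
            if any(rouge_l(candidate, task) >= threshold for task in pool):
                continue
            pool.append(candidate)
    return pool


# Hypothetical stand-in for prompting an LLM with in-context examples drawn from the pool.
def toy_generate(pool: List[str]) -> List[str]:
    return [
        "Translate the sentence into French.",
        "Translate the sentence into French.",  # exact duplicate, filtered out
        "List three symptoms of dehydration.",
        "Convert a temperature from Celsius to Fahrenheit.",
    ]


seed = ["Find the zip code for the given address.", "Summarize the following paragraph."]
pool = self_instruct(seed, toy_generate, target_size=10)
print(len(pool))
```

With this toy generator the pool grows from 2 to 5 tasks in the first round; afterwards every candidate is a near-duplicate, so the pool stops growing and `max_rounds` ends the loop. A real generator would keep proposing new tasks until `target_size` is reached.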

LLMs unburden cardiologists

Transfer learning makes it easier to integrate LLMs into healthcare settings by using pre-trained models as a starting point for further training and adaptation to medical domains. Cardiovascular-specific fine-tuning, which continues training a pre-trained LLM on relevant data so that it performs well on tasks within the field, helps give models the most relevant and up-to-date medical knowledge.3
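The simplest form of transfer learning can be sketched as keeping a pre-trained encoder frozen and training only a small task-specific head on domain data. Everything here is a hypothetical toy: `frozen_encoder` is a keyword-based placeholder for a real pre-trained LLM, and the four labelled sentences are invented. Full fine-tuning would also update the encoder's own weights; this sketch shows only the head-only variant.

```python
import math
from typing import Callable, List, Sequence, Tuple


# Hypothetical stand-in for a frozen, pre-trained encoder: it maps text to a fixed
# feature vector, and its parameters are never updated during fine-tuning.
def frozen_encoder(text: str) -> List[float]:
    words = set(text.lower().split())
    return [
        1.0 if words & {"chest", "pain", "angina"} else 0.0,
        1.0 if words & {"st", "elevation", "troponin"} else 0.0,
        1.0,  # constant bias feature
    ]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fine_tune_head(
    data: Sequence[Tuple[str, int]],
    encoder: Callable[[str], List[float]],
    epochs: int = 200,
    lr: float = 0.5,
) -> List[float]:
    """Train only a logistic-regression head on the frozen features (SGD on log-loss)."""
    weights = [0.0] * len(encoder(data[0][0]))
    for _ in range(epochs):
        for text, label in data:
            x = encoder(text)
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)))
            # Gradient of the log-loss with respect to the head weights is (p - label) * x.
            weights = [w - lr * (p - label) * xi for w, xi in zip(weights, x)]
    return weights


def predict(weights: List[float], encoder: Callable[[str], List[float]], text: str) -> int:
    x = encoder(text)
    return int(sigmoid(sum(w * xi for w, xi in zip(weights, x))) >= 0.5)


# Tiny hypothetical training set (1 = cardiac concern, 0 = not).
train = [
    ("patient reports chest pain with st elevation", 1),
    ("routine checkup with no complaints", 0),
    ("troponin rise and angina at rest", 1),
    ("follow up visit for a sprained ankle", 0),
]
head = fine_tune_head(train, frozen_encoder)
print([predict(head, frozen_encoder, text) for text, _ in train])
```

On this toy set the trained head separates the two classes and prints `[1, 0, 1, 0]`. Freezing the encoder keeps training cheap, which is one reason head-only adaptation is a common first step before full fine-tuning.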

LLMs diagnose heart disease, generate ECG diagnosis reports

Figure 3, above, shows the architecture: how embeddings flow through the model and how the generated embeddings are aligned with ground-truth embeddings.

The methodology for generating reports with LLMs is as follows. Given electrocardiogram (ECG) signals $x = [x_1, x_2, \ldots, x_t]$, the model learns a generated text embedding $L = [L_1, L_2, \ldots, L_t]$, which is then either decoded into a natural-language report or used directly for disease classification. In this study, the zero-shot classification approach achieved performance competitive with supervised learning baselines. The approach was tested on five conditions, including normal ECGs and myocardial infarction.4
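The zero-shot classification step can be sketched as nearest-neighbour matching in a shared embedding space: an ECG embedding is compared with text embeddings of each condition name, and the most similar label wins, with no labelled training examples for the classifier itself. The three-dimensional vectors below are invented for illustration; a real system would obtain them from a trained ECG encoder and a pre-trained language model, and cosine similarity is an assumed scoring function.

```python
import math
from typing import Dict, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def zero_shot_classify(ecg_embedding: Sequence[float],
                       label_embeddings: Dict[str, Sequence[float]]) -> str:
    """Return the label whose text embedding is most similar to the ECG embedding."""
    return max(label_embeddings,
               key=lambda label: cosine_similarity(ecg_embedding, label_embeddings[label]))


# Hypothetical text embeddings for two of the conditions mentioned above.
label_embeddings = {
    "normal ECG": [0.9, 0.1, 0.0],
    "myocardial infarction": [0.1, 0.8, 0.5],
}
# Hypothetical embedding produced by an ECG encoder for one recording.
ecg_embedding = [0.2, 0.7, 0.6]
print(zero_shot_classify(ecg_embedding, label_embeddings))
```

Here the ECG embedding points almost in the same direction as the "myocardial infarction" label embedding, so that label is returned. Adding a new condition only requires embedding its name, not retraining a classifier.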

LLMs in cardiovascular image segmentation

Cardiac magnetic resonance imaging (MRI) is a non-invasive technique that visualizes the structures within and around the heart, and AI is making the segmentation of these images more efficient, accurate, and cost-effective. Segmentation methods often rely on landmarks, or key points, that anchor a contour to specific, well-defined anatomical points such as the apex of the left ventricle (LV). Models are trained both to recognize the target anatomical landmark and to localize it by learning and following an optimal navigation path through the image volume. One such method was tested on 5,000 three-dimensional computed tomography (CT) volumes and detected the landmarks with 100% accuracy. Once the landmarks are located, the model estimates the orientation and scale of the LV and infers its shape from the appearances and shapes in the database it was trained on. To track myocardial motion, segmentation is followed by a temporal tracking algorithm that follows the myocardial border across frames.5
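The idea of navigating through a volume toward a landmark can be illustrated with a greedy search on a 3D voxel grid: starting from an arbitrary voxel, the search repeatedly steps to whichever neighbouring voxel scores highest and stops when no neighbour improves. This is a deliberate simplification; the reviewed method learns its navigation policy with deep reinforcement learning, whereas here `toy_score` is a hypothetical stand-in for that learned model and the landmark position is invented.

```python
from typing import Callable, Tuple

Voxel = Tuple[int, int, int]
# Six axis-aligned, single-voxel moves through the volume.
MOVES = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def navigate(start: Voxel, score: Callable[[Voxel], float],
             shape: Tuple[int, int, int], max_steps: int = 1000) -> Voxel:
    """Greedily move to the best-scoring neighbour until no neighbour improves."""
    pos = start
    for _ in range(max_steps):
        best = pos
        for dx, dy, dz in MOVES:
            nxt = (pos[0] + dx, pos[1] + dy, pos[2] + dz)
            in_bounds = all(0 <= c < s for c, s in zip(nxt, shape))
            if in_bounds and score(nxt) > score(best):
                best = nxt
        if best == pos:  # no better neighbour: report this voxel as the landmark
            return pos
        pos = best
    return pos


# Hypothetical landmark (e.g., the LV apex) and a toy score that peaks there.
TRUE_APEX: Voxel = (5, 3, 7)


def toy_score(v: Voxel) -> float:
    return -sum((a - b) ** 2 for a, b in zip(v, TRUE_APEX))


print(navigate((0, 0, 0), toy_score, shape=(10, 10, 10)))
```

Because this toy score has a single peak, the search reaches `(5, 3, 7)` from any starting voxel. A learned score is noisier, which is one reason the published approach trains a navigation policy rather than relying on plain hill climbing.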


Limitations, other considerations

AI was underutilized for a long time, and largely nonexistent before that, but now overreliance is the greater risk: AI should augment human expertise, not replace it. This is especially true because the accuracy and reliability of LLM-generated output, which are of paramount importance in healthcare, cannot yet be guaranteed.

Rigorous validation, continuous monitoring, and collaboration with medical experts are therefore essential, which makes implementation expensive and resource-intensive. Transparency in the development and deployment of LLMs is also crucial to ensure their ethical use and to keep public opinion from souring on the technology.
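Continuous monitoring can take many forms. One simple, hypothetical version tracks how often the model's outputs agree with clinician review over a sliding window of recent cases and flags the system for review when agreement drops below a threshold. The `AgreementMonitor` class, the window size, and the 0.9 threshold below are illustrative assumptions, not clinical recommendations.

```python
from collections import deque
from typing import Deque


class AgreementMonitor:
    """Track model-vs-clinician agreement over the most recent `window` cases."""

    def __init__(self, window: int = 100, threshold: float = 0.9) -> None:
        self.results: Deque[bool] = deque(maxlen=window)  # oldest cases drop off automatically
        self.threshold = threshold

    def record(self, model_label: str, clinician_label: str) -> None:
        self.results.append(model_label == clinician_label)

    def agreement(self) -> float:
        # With no cases recorded yet, report full agreement (no evidence of a problem).
        return sum(self.results) / len(self.results) if self.results else 1.0

    def needs_review(self) -> bool:
        # Only alert once the window is full, so a few early cases cannot trigger it.
        return len(self.results) == self.results.maxlen and self.agreement() < self.threshold


monitor = AgreementMonitor(window=10, threshold=0.9)
for i in range(10):
    # Hypothetical stream: the model misses the clinician's finding in 2 of 10 cases.
    clinician = "MI" if i % 5 == 0 else "normal"
    model = "normal"
    monitor.record(model, clinician)
print(monitor.agreement(), monitor.needs_review())
```

In this toy stream agreement is 0.8, below the 0.9 threshold, so the monitor requests review. In practice the comparison labels themselves come from the ongoing expert collaboration described above.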

Figure 4, above, illustrates the steps to take when implementing LLMs and what to keep in mind when developing them.

LLMs have already emerged as a revolutionary force that is here to stay, transforming industries and reshaping the future of AI-driven technologies. These powerful algorithms, fueled by DL techniques and vast, seemingly ever-growing datasets, are unlocking new possibilities that are poised to keep expanding, and healthcare stands to be one of the biggest beneficiaries of this trend.

References:

1. Kerner, Sean Michael. What Is a Large Language Model (LLM)? – TechTarget Definition. WhatIs.Com, 7 Apr. 2023, http://www.techtarget.com/whatis/definition/large-language-model-LLM

2. Wang, Yizhong, et al. SELF-INSTRUCT: Aligning Language Models With Self-Generated Instructions, 25 May 2023, arxiv.org/pdf/2212.10560.pdf

3. Karabacak, Mert, and Konstantinos Margetis. Embracing Large Language Models for Medical Applications: Opportunities and Challenges. Cureus, 21 May 2023, http://www.cureus.com/articles/149797-embracing-large-language-models-for-medical-applications-opportunities-and-challenges#!/

4. Han, William, et al. Transfer Knowledge from Natural Language to Electrocardiography: Can We …, 4 Feb. 2023, aclanthology.org/2023.findings-eacl.33.pdf

5. Dey, Damini, et al. Artificial Intelligence in Cardiovascular Imaging: JACC State-of-the-Art Review. Journal of the American College of Cardiology, 26 Mar. 2019, http://www.ncbi.nlm.nih.gov/pmc/articles/PMC6474254/